A year ago, we wrote a blog post titled “AI is harming our planet: addressing AI’s staggering energy cost” to shed light on the alarming energy demands of AI and to introduce brain-based techniques that could mitigate these environmental concerns. Today, as the prominence of AI applications and technologies like ChatGPT and Large Language Models (LLMs) continues to soar, the importance of this discussion has only escalated.

On its current trajectory, AI will only accelerate the climate crisis. In contrast, our brains are incredibly efficient, consuming around 20 watts of power, about as much as it takes to power a light bulb. If we can apply neuroscience-based techniques to AI, there is enormous potential to dramatically decrease the amount of energy used for computation and thus cut down on greenhouse gas emissions. In light of the advancements that have shaped the AI landscape over the past year, this blog post aims to revisit the environmental concerns underscored in the original post, and how brain-based techniques can address AI’s incredibly high energy cost.

Why does AI consume so much energy?

First, it is worth understanding how a deep learning model works in simple terms. Deep learning models, like LLMs, are not intelligent the way your brain is intelligent. They don’t learn information in a structured way. Unlike you, they can’t interact with the world to learn cause-and-effect, context, or analogies. Deep learning models can be viewed as “brute force” statistical techniques that thrive on vast amounts of data.

For example, if you want to train a deep learning model to understand and write about a cat, you show it thousands of text samples related to cats. The model does not understand that a cat purrs or is more likely than a dog to play with a feather. Even if it can output text stating that a cat purrs, it does not understand purring the way a child does after playing with a cat and a dog and learning their differences within an hour. The model cannot understand the world just by examining how words and phrases appear together. To make inferences, it needs to be trained on massive amounts of data to observe as many combinations as possible.

The enormous energy requirement of these brute force statistical models is due to the following attributes:

- Requires millions or billions of training examples. In the cat example, sentences are needed that describe cats from different perspectives and contexts. Sentences are needed about different breeds, colors and shadings. Sentences are needed about the different environments where cats might be found. There are so many different ways to describe a cat, and the model must be trained on volumes of information related to millions of different concepts that involve cats.

- Requires many training cycles. The process of training the model involves learning from errors. If the model has incorrectly predicted that a story about a cat ended with it happily enjoying a cold bath, the model readjusts its parameters and refines its classification, then retrains. It learns slowly from its mistakes, which requires many iterations through the entire dataset.

- Requires retraining when presented with new information. If the model is now required to write about dogs, which it has never learned about before, it can use the context provided in the prompt about dogs to improvise on the fly. However, it might produce inaccuracies and won’t retain this new information for future use. Alternatively, it can be trained on this new data, but at the cost of forgetting its prior knowledge, i.e. details about cats. To write about cats and dogs, the model will need to be retrained from the start. It will need to have descriptions about the breeds of dogs and their behaviors added to the training set and be retrained from scratch. The model cannot learn incrementally.

- Requires many weights and lots of multiplication. A typical neural network has many connections, or weights, that are represented by matrices. For the network to compute an output, it needs to perform numerous matrix multiplications through subsequent layers until a pattern emerges at the end. In fact, it often takes millions of steps to compute the output of a single layer! A typical network might contain dozens to hundreds of layers, making the computations incredibly energy intensive.
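To make the last point concrete, here is a minimal sketch that counts the multiply-accumulate operations needed to push one input vector through a stack of dense layers. The layer width (4096) and depth (24 weight matrices) are hypothetical values chosen for illustration, not measurements of any particular model.

```python
def dense_layer_macs(n_inputs: int, n_outputs: int) -> int:
    """Multiply-accumulate operations for one dense layer on one input vector.

    Each of the n_outputs units multiplies every one of the n_inputs values
    by a weight, so the count is simply n_inputs * n_outputs.
    """
    return n_inputs * n_outputs


def network_macs(layer_sizes: list[int]) -> int:
    """Total multiply-accumulates for consecutive dense layers."""
    return sum(
        dense_layer_macs(n_in, n_out)
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )


# Hypothetical network: 25 layers of 4096 units, i.e. 24 weight matrices.
single_layer = dense_layer_macs(4096, 4096)
whole_network = network_macs([4096] * 25)

print(f"One layer:     {single_layer:,} multiply-accumulates")
print(f"Whole network: {whole_network:,} multiply-accumulates")
```

Even this modest hypothetical layer needs millions of multiplications for a single input, and the cost repeats for every layer, every input, and every training pass.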

How much energy does AI consume?

A paper from the University of Massachusetts Amherst stated that “training a single AI model can emit as much carbon as five cars in their lifetimes.” Yet, this analysis pertained to only one training run. When the model is improved by training repeatedly, the energy use will be vastly greater. Many large companies, which can train thousands upon thousands of models daily, are taking the issue seriously. This paper by Meta is a good example of one such company that is exploring AI’s environmental impact, studying ways to address it, and issuing calls to action.

The latest language models include billions and even trillions of weights. GPT-4, the LLM that powers ChatGPT, reportedly has around 1.7 trillion parameters, a figure OpenAI has not confirmed. Training it is said to have taken 25,000 Nvidia A100 GPUs, 90 to 100 days, and $100 million. While energy usage has not been disclosed, it’s estimated that GPT-4 consumed between 51,773 MWh and 62,319 MWh, more than 40 times what its predecessor, GPT-3, consumed. This is equivalent to the energy consumption of 1,000 average US households over 5 to 6 years.
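The household comparison can be checked with a few lines of arithmetic. The sketch below uses the estimated training energy range quoted above. The per-household figure of 10.5 MWh per year is our assumption, a round number for average US household electricity use, and is not taken from the estimate itself.

```python
# Assumption: an average US household uses roughly 10.5 MWh of electricity
# per year. This is a round illustrative figure, not part of the estimate.
ASSUMED_HOUSEHOLD_MWH_PER_YEAR = 10.5


def household_years(
    energy_mwh: float,
    households: int = 1000,
    mwh_per_household_year: float = ASSUMED_HOUSEHOLD_MWH_PER_YEAR,
) -> float:
    """Years that `households` homes could run on `energy_mwh` of electricity."""
    return energy_mwh / (households * mwh_per_household_year)


# Estimated GPT-4 training energy range quoted in the text, in MWh.
low_years = household_years(51_773)
high_years = household_years(62_319)

print(f"Equivalent to 1,000 households for {low_years:.1f} to {high_years:.1f} years")
```

Under that assumption the range works out to roughly five to six years, consistent with the comparison in the text.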

It is estimated that inference costs and power usage are at least 10 times higher than training costs. To put this into perspective, in January, ChatGPT consumed roughly as much electricity per month as 26,000 US households for inference. As the models get bigger and bigger to handle more complex tasks, the demand for servers to process the models grows exponentially.

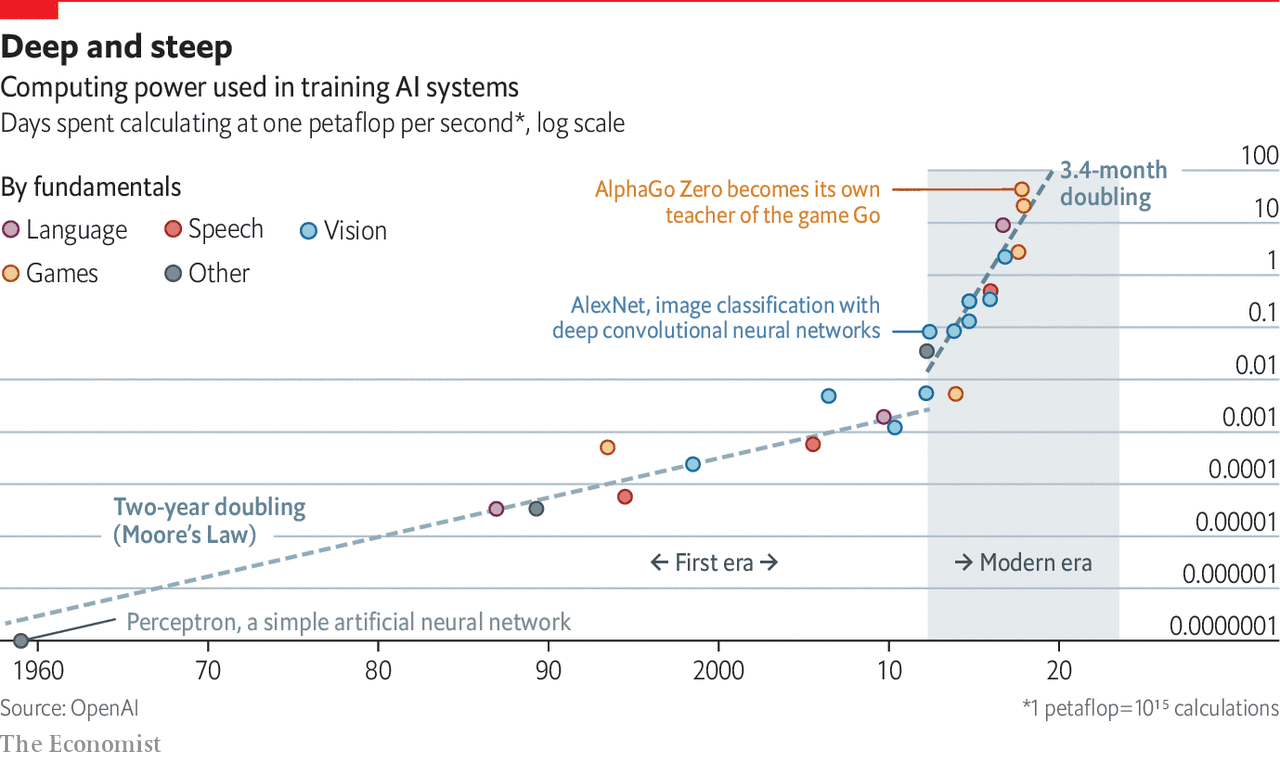

Since 2012, the computational resources needed to train these AI systems have been doubling every 3.4 months. One business partner told us that their deep learning models could power a city. This escalation in energy use runs counter to the stated goals of many organizations to achieve carbon neutrality in the coming decade.
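A doubling period of 3.4 months compounds quickly. As a rough sketch, assuming the doubling rate stays constant over the period considered, the growth factor after a given number of months is 2 raised to the number of doubling periods elapsed:

```python
def growth_factor(months: float, doubling_period_months: float = 3.4) -> float:
    """Multiplicative growth after `months`, assuming a constant doubling period."""
    return 2.0 ** (months / doubling_period_months)


one_year = growth_factor(12)
two_years = growth_factor(24)

print(f"After one year:  about {one_year:.1f}x the compute")
print(f"After two years: about {two_years:.0f}x the compute")
```

At that pace, compute requirements grow by more than an order of magnitude every year.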

How can we reduce AI’s carbon footprint?

We propose to address this challenging issue by taking lessons from the brain. The human brain is the best example we have of a truly intelligent system, yet it operates on very little energy, essentially the same energy required to operate a light bulb. This efficiency is remarkable when compared to the inefficiencies of deep learning systems.

How does the brain operate so efficiently? Our research, deeply grounded in neuroscience, suggests a roadmap to make AI more efficient. Here are several reasons behind the brain’s remarkable capability to process data without using much power.

1/ Sparsity

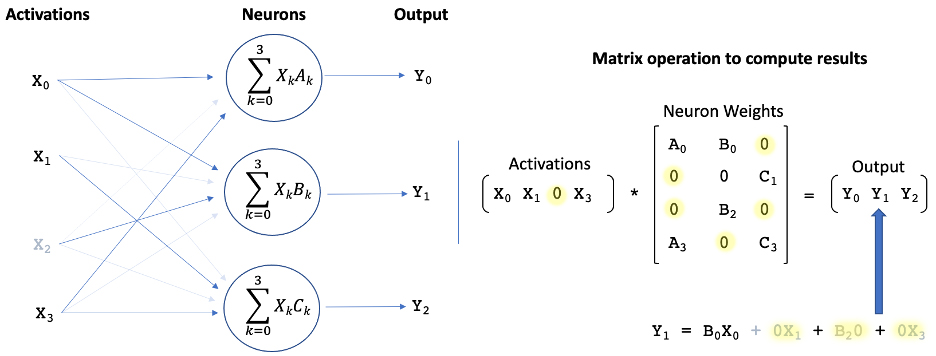

Information in the brain is encoded as sparse representations, i.e., a long string of mostly zeros with a few non-zero values. This approach is different from typical representations in computers, which are normally dense. Because sparse representations have many zero elements, these can be skipped when multiplying with other numbers, so that only the non-zero values are used. In the brain, representations are extremely sparse: as many as 98% of the numbers are zero. If we can represent information in artificial systems with similar levels of sparsity, we can eliminate a huge number of computations. We have demonstrated that using sparse representations in inference tasks in deep learning can reduce power consumption by a factor of 3 to 100, depending on the network, the hardware platform, and the data type, without any loss of accuracy.
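The saving from skipping zeros can be illustrated with a dot product. This is a minimal sketch in plain Python, not our production implementation: the vector length (100) and the two non-zero entries (98% zeros) are illustrative choices, and the helper names are hypothetical.

```python
def to_sparse(dense: list[float]) -> dict[int, float]:
    """Store only the non-zero entries as {index: value}."""
    return {i: v for i, v in enumerate(dense) if v != 0.0}


def sparse_dot(sparse: dict[int, float], weights: list[float]) -> tuple[float, int]:
    """Dot product that touches only non-zero entries.

    Returns the result and the number of multiplications performed.
    """
    total = 0.0
    multiplications = 0
    for index, value in sparse.items():
        total += value * weights[index]
        multiplications += 1
    return total, multiplications


# A 100-element vector in which 98% of the entries are zero.
activations = [0.0] * 100
activations[3] = 2.0
activations[57] = -1.0
weights = [0.5] * 100

result, mults = sparse_dot(to_sparse(activations), weights)
print(f"result={result}, multiplications={mults} instead of {len(activations)}")
```

The sparse version produces the same answer as a dense dot product while performing 2 multiplications instead of 100.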

A Closer Look: Applying sparsity to machine learning

There are two key aspects of brain sparsity that can be translated to deep neural networks (DNNs): activation sparsity and weight sparsity. Sparse networks can constrain the activity (activation sparsity) and connectivity (weight sparsity) of their neurons, which can significantly reduce the size and computational complexity of the model.
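The two kinds of sparsity can be sketched as follows. Keeping only the k largest activations (a "k-winners" rule) and masking a fixed random fraction of each unit's weights are common ways to impose these constraints; they are used here as illustrative assumptions and are not necessarily the exact mechanisms in our networks. The function names and sizes are hypothetical.

```python
import random


def k_winners(activations: list[float], k: int) -> list[float]:
    """Activation sparsity: keep the k largest activations, zero the rest."""
    if k >= len(activations):
        return list(activations)
    ranked = sorted(range(len(activations)), key=lambda i: activations[i], reverse=True)
    winners = set(ranked[:k])
    return [a if i in winners else 0.0 for i, a in enumerate(activations)]


def sparse_weight_mask(n_out: int, n_in: int, density: float, seed: int = 0) -> list[list[int]]:
    """Weight sparsity: each output unit keeps a fixed random subset of its inputs."""
    rng = random.Random(seed)
    kept_per_unit = max(1, round(n_in * density))
    mask = []
    for _ in range(n_out):
        kept = set(rng.sample(range(n_in), kept_per_unit))
        mask.append([1 if j in kept else 0 for j in range(n_in)])
    return mask


sparse_acts = k_winners([0.1, 0.9, 0.3, 0.7], k=2)
mask = sparse_weight_mask(n_out=4, n_in=20, density=0.1)

print(sparse_acts)                   # only the two largest activations survive
print([sum(row) for row in mask])    # each unit keeps 2 of its 20 connections
```

When both constraints are applied, a multiplication is only needed where a surviving activation meets a surviving weight, which is where the largest savings come from.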

2/ Structured data

Your brain builds models of the world through sensory information streams and movement. These models can capture the 3-D structure of incoming data, such that your brain understands that the view of the cat’s left side and the view of the cat’s right side do not need to be learned independently. The models are based on something we call “reference frames.” Reference frames enable structured learning. They allow us to build models that include the relationships between various objects and concepts. We can incorporate the notion that cats may have a relationship to trees or feathers without having to read millions of instances of cats with trees. Building a model with reference frames requires substantially fewer samples than deep learning models. With just a few example descriptions of a cat, the model should be able to transpose the data to understand alternate descriptions of the cat, without being trained specifically on those descriptions. This approach will reduce the size of training sets by several orders of magnitude.

A Closer Look: Structured learning with reference frames

Reference frames are like gridlines on a map, or x,y,z coordinates. Every fact you know is paired with a location in a reference frame, and your brain is constantly moving through reference frames to recall facts that are stored in different locations. This allows you to move, rotate and change things in your head. If someone asked you to describe a blue cartoon cat, you could do it easily. You’d imagine it instantly, based on your reference frame of what a cat looks like in real life and the color blue. There’s very little chance you would describe a blue whale, or a cartoon Smurf.

3/ Continual learning

Your brain learns new things without forgetting what it knew before. When you read about an animal for the first time, say a coyote, your brain does not need to relearn everything it knows about mammals. It adds a reference frame for the coyote to its memory, noting similarities and differences from other reference frames, such as a dog, and sharing common behaviors and actions, such as hunting. This small incremental training requires very little power.

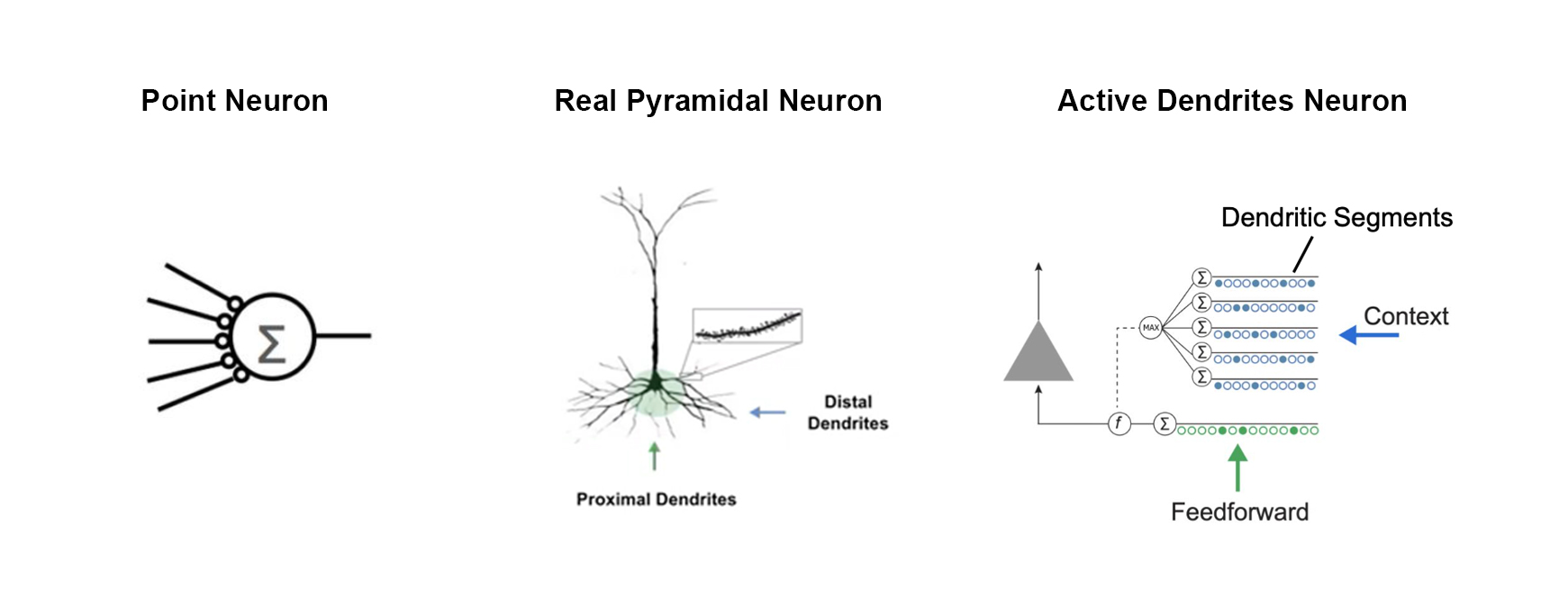

A Closer Look: Multi-task and continual learning with active dendrites

A biological neuron has two kinds of dendrites: distal and proximal. Only proximal dendrites are modeled in the artificial neurons we see today. We have demonstrated that by incorporating distal dendrites into the neuron model, the network is able to learn new information without wiping out previously learned knowledge, thereby avoiding the need to be retrained.
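One way to picture a neuron with both kinds of dendrites is sketched below. This is a simplified illustration under our own assumptions, not a description of the exact model: the feedforward (proximal) input is computed as usual, a set of dendritic segments (distal) responds to a separate context vector, and the strongest segment response gates the output through a sigmoid. All names, weights, and context vectors here are hypothetical.

```python
import math


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def dendritic_neuron(
    inputs: list[float],
    feedforward_weights: list[float],
    dendritic_segments: list[list[float]],
    context: list[float],
) -> float:
    """Feedforward response gated by the strongest context-matching segment."""
    feedforward = dot(inputs, feedforward_weights)        # proximal input
    strongest = max(dot(segment, context) for segment in dendritic_segments)
    return feedforward * sigmoid(strongest)               # distal gating


inputs = [1.0, 2.0]
feedforward_weights = [0.5, 0.25]
segments = [[4.0, -4.0], [2.0, -6.0]]    # hypothetical learned segments

task_a = [1.0, 0.0]    # context this neuron's segments respond to
task_b = [0.0, 1.0]    # context this neuron's segments do not respond to

out_a = dendritic_neuron(inputs, feedforward_weights, segments, task_a)
out_b = dendritic_neuron(inputs, feedforward_weights, segments, task_b)
print(f"task A output: {out_a:.3f}, task B output: {out_b:.3f}")
```

In this sketch the same feedforward input drives the neuron strongly in one context and barely at all in the other, so different tasks can rely on largely separate sets of neurons and interfere with each other less.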

4/ Optimized hardware

Today’s semiconductor architecture is optimized for deep learning, where networks are dense and learning is unstructured. But if we want to create more sustainable AI, we need hardware that can incorporate all three of the above attributes: sparsity, reference frames, and continual learning. We have already created a few techniques for sparsity. These techniques map sparse representations into a dense computing environment and improve both inference and training performance. Over the long run, we can imagine architectures optimized for these brain-based principles that will have the potential to provide even more performance improvements.

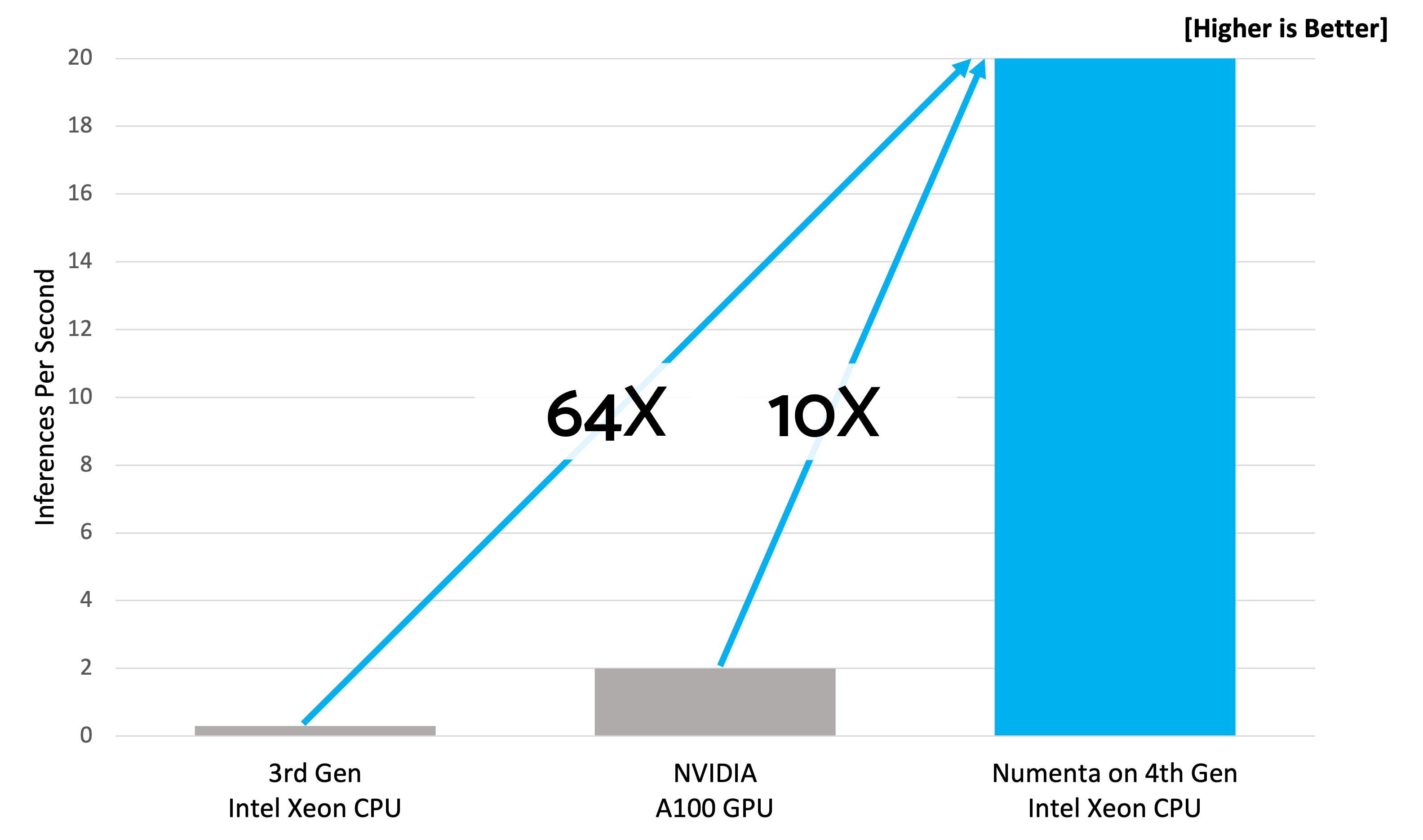

A Closer Look: Unparalleled Scaling on CPUs

Building on our decades of neuroscience research, we’ve created unique architectures, data structures and algorithms that enable 10-150x cost and speed improvements across all LLMs, from BERT to GPT, on today’s CPUs. Being able to run these large models on CPUs reduces not only cost and energy usage but also the complexity of deployment for a company’s IT infrastructure.

Towards a more sustainable future

Continuing to build larger and more computationally intensive deep learning networks is not a sustainable path to building intelligent machines. At Numenta, we believe a brain-based approach is needed to build efficient and sustainable AI. We have to develop AI that works smarter, not harder.

The combination of fewer computations, fewer training samples, fewer training passes, and optimized hardware multiplies out to tremendous improvements in energy usage. If we had 10x fewer computations, 10x fewer training samples, 10x fewer training passes and 10x more efficient hardware, that would lead to a system that was 10,000x more efficient overall.
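The arithmetic behind that figure is a simple product, on the assumption that the four gains are independent and therefore multiply. The 10x values below are the hypothetical figures from the paragraph above, not measured results.

```python
import math

# Hypothetical per-factor gains from the text; assumed to be independent.
assumed_gains = {
    "computations": 10,
    "training samples": 10,
    "training passes": 10,
    "hardware efficiency": 10,
}

overall_gain = math.prod(assumed_gains.values())
print(f"Overall efficiency gain: {overall_gain:,}x")
```

Because the factors multiply rather than add, a modest improvement in each area compounds into a very large overall gain.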

In the short term, we have created an AI platform that is designed to reduce energy consumption in inference on CPUs substantially. In the medium term, we are applying these techniques to training, and we expect even greater energy savings as we reduce the number of training passes needed. Over the long term, with enhanced hardware, we see the potential for thousandfold improvements.

Abstracting from the brain and applying the brain’s principles to current deep learning architectures can propel us towards a new paradigm in AI that is sustainable. If you’d like to learn more about our work on creating energy-efficient AI, check out our blogs below.

- The Path to Machine Intelligence: Classic AI vs. Deep Learning vs. Biological Approach

- Generative AI’s Hidden Secret – How to Accelerate GPT-scale Models for AI Inference

- Numenta and Intel Accelerate Inference 20x on Large Language Models

- Build and Scale Powerful NLP Applications with Numenta’s AI Platform