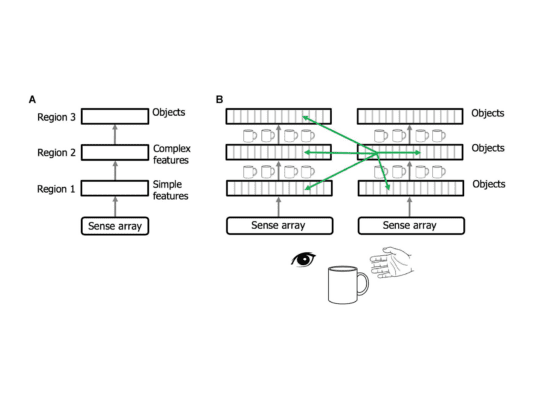

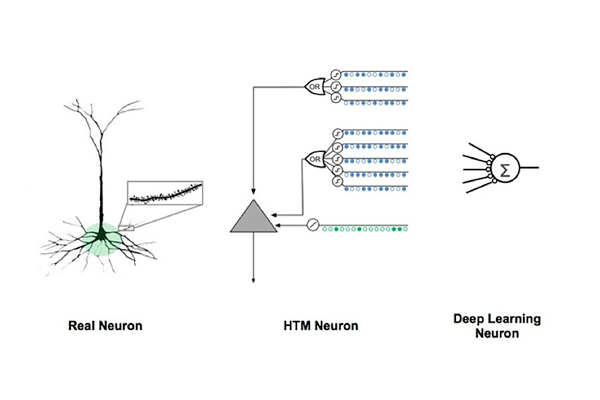

In 1978, Vernon Mountcastle proposed that the way we see, feel, hear, move, and even do high-level planning runs on the same cortical circuitry. In his view, the cortical column is the basic functional unit, replicated with little variation across the cortical sheet. If that is right, understanding a single cortical column would mean understanding the neocortex.

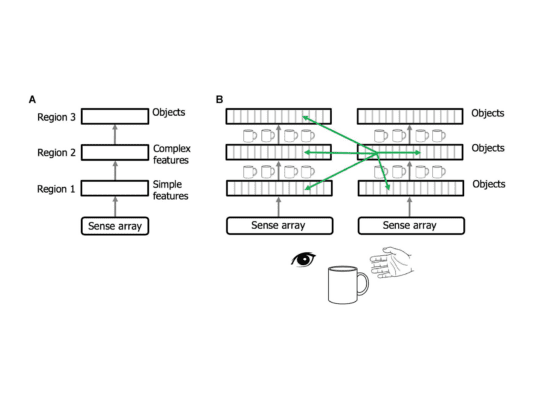

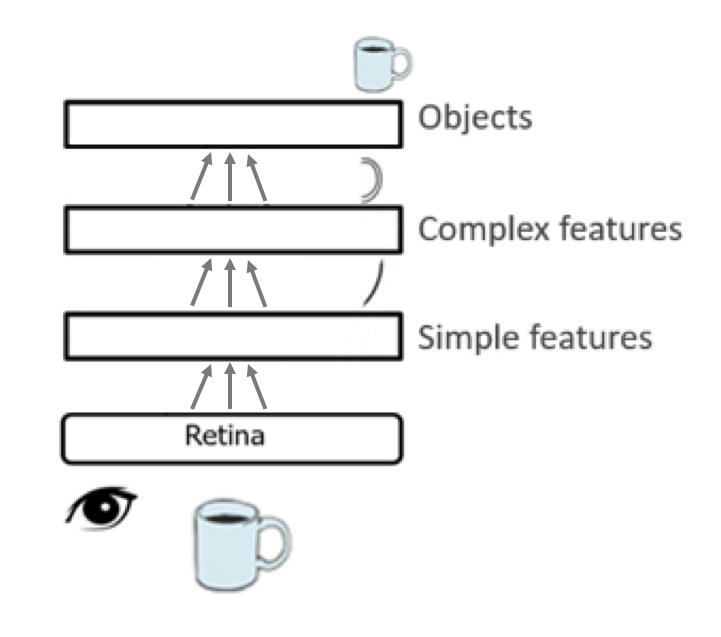

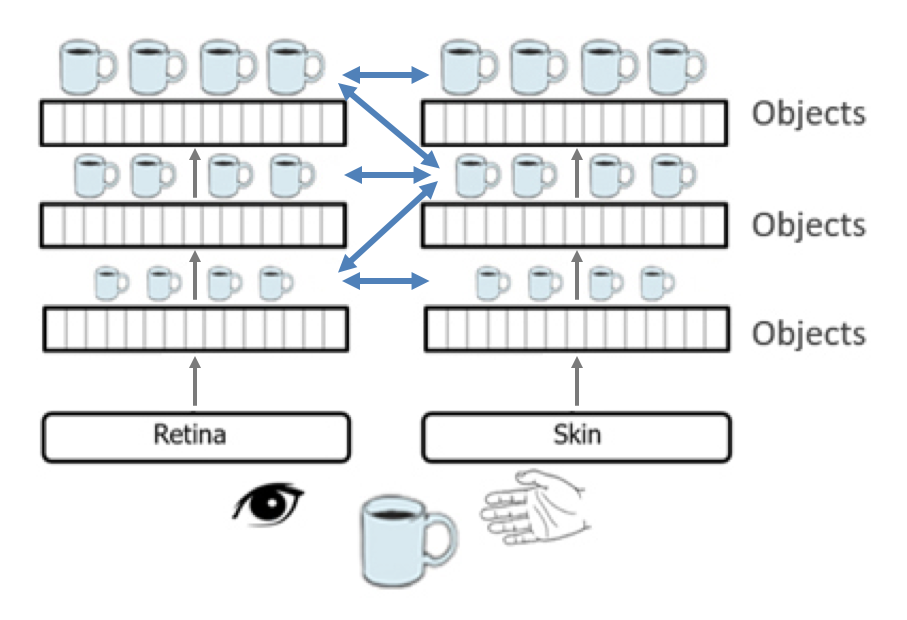

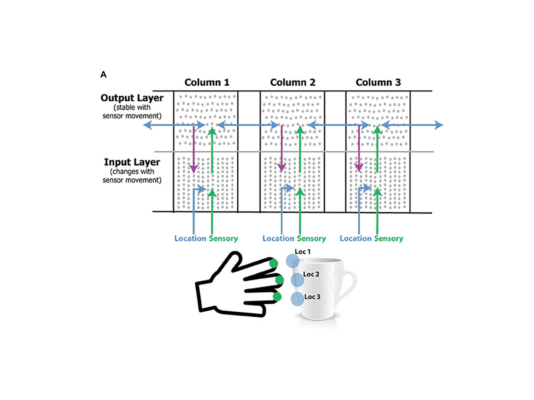

Building on Mountcastle’s proposal, the Thousand Brains Theory states that every cortical column, even in low-level sensory regions, can learn and recognize complete objects by combining sensory input with movement. Together, the columns build tens of thousands of models of everything we know.

It’s as if your brain is actually thousands of brains working simultaneously.